The Only Fan Hub Musicians Need, and It's Free!

Get Started

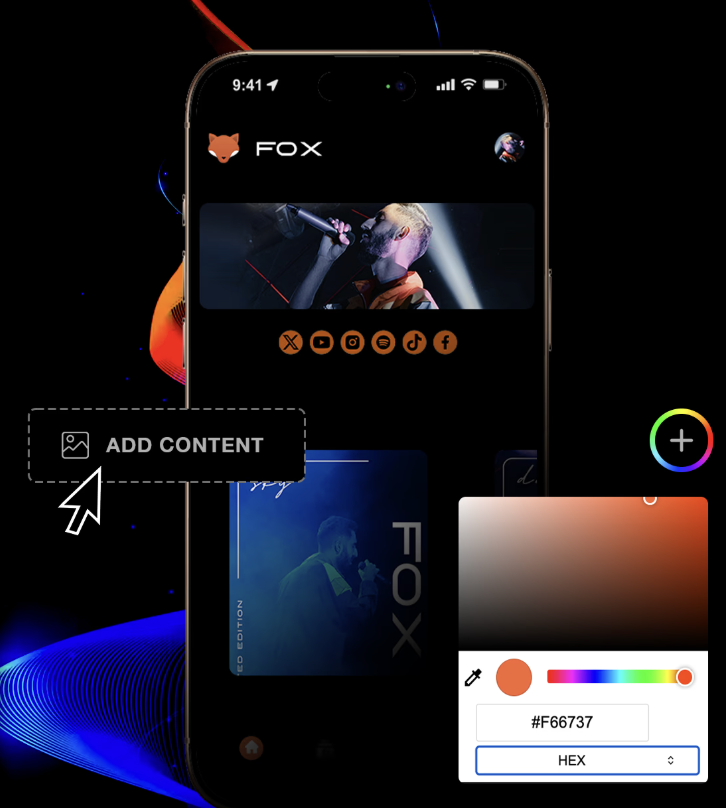

Take control of your music career in minutes

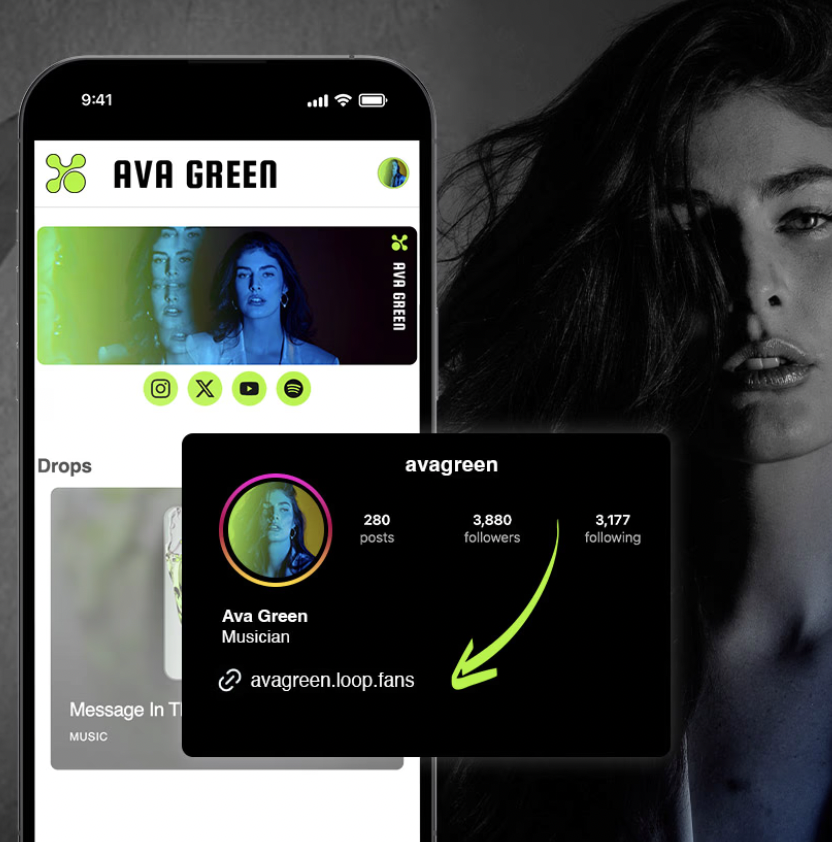

Grow Your Fanbase

Build and grow a dedicated audience with your own branded fan hub.

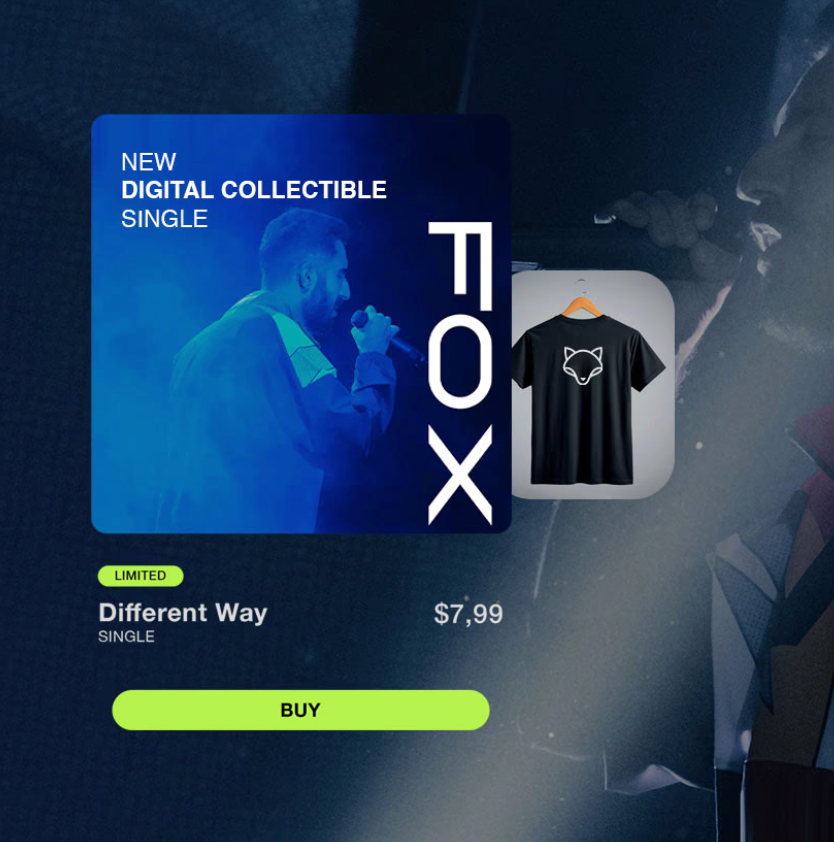

Sell Your Music

Sell music, merch, and digital content directly to your fans.

Connect with Fans

Own your fan relationships and communicate with fans directly.

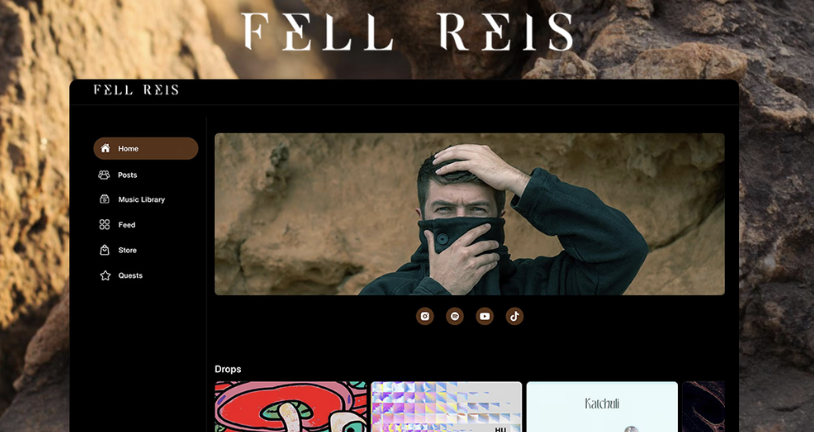

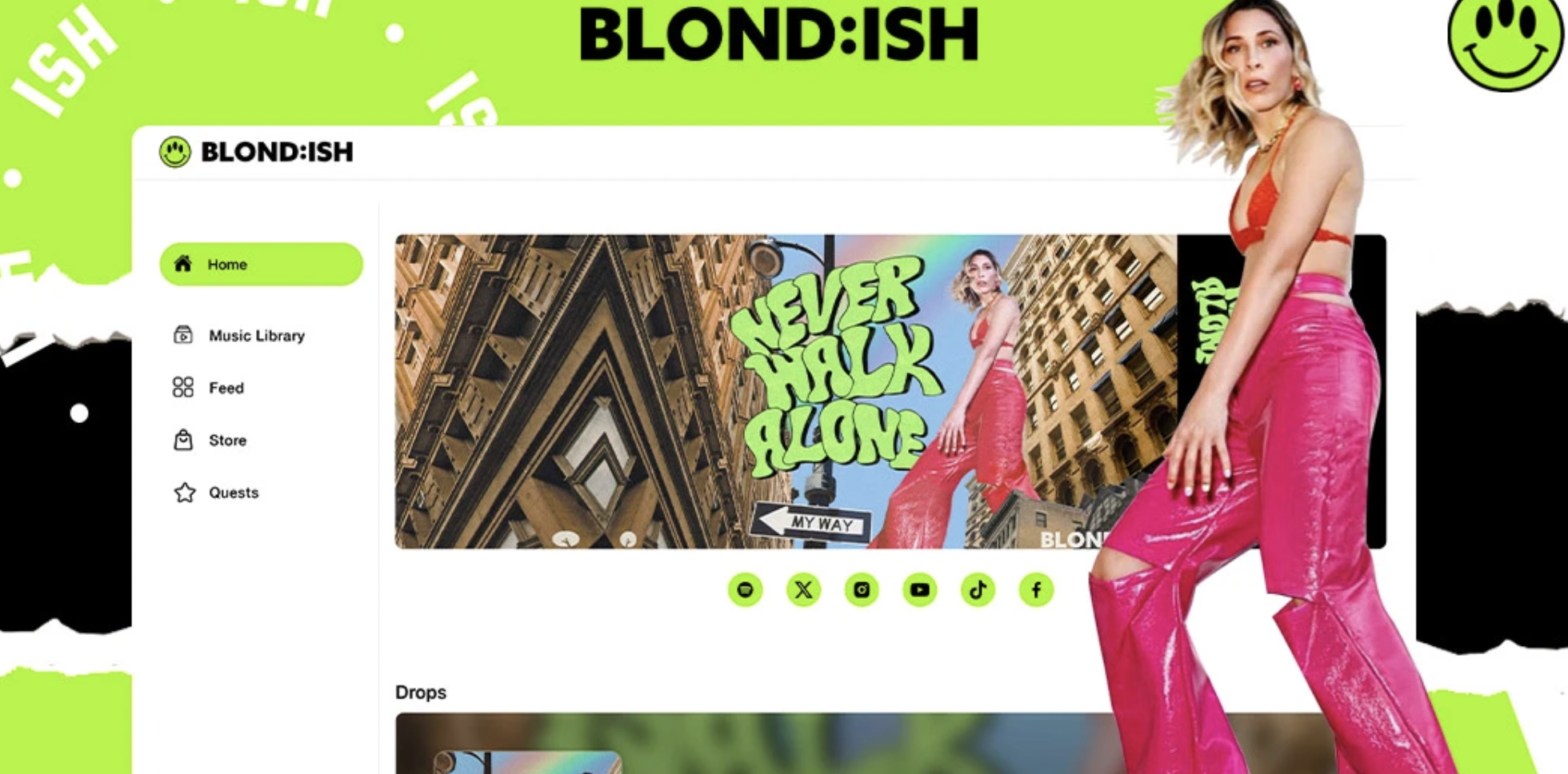

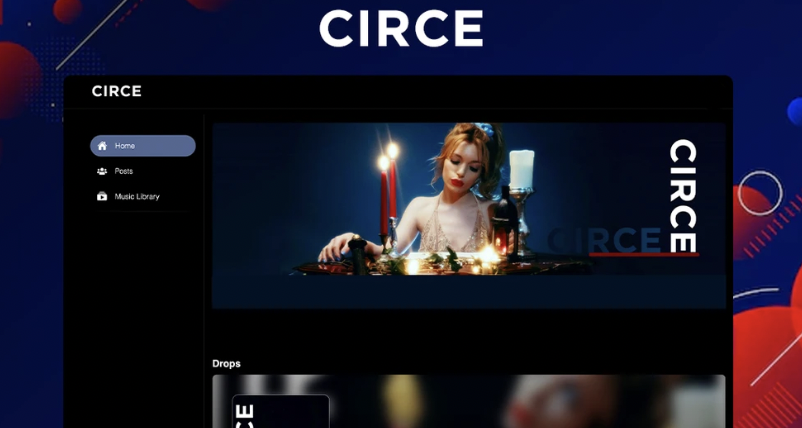

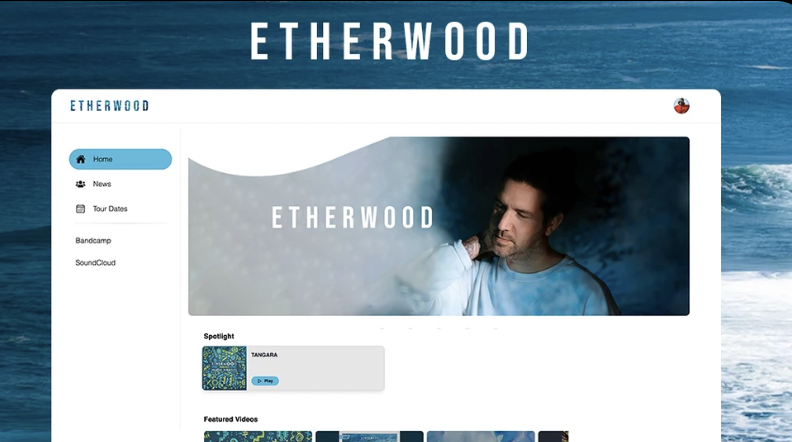

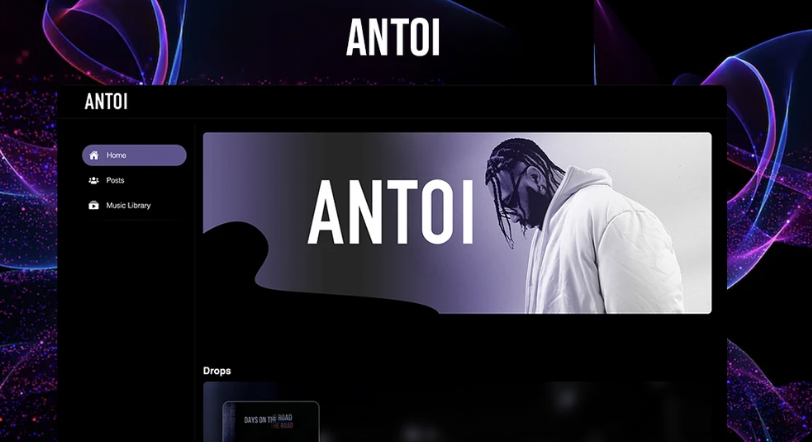

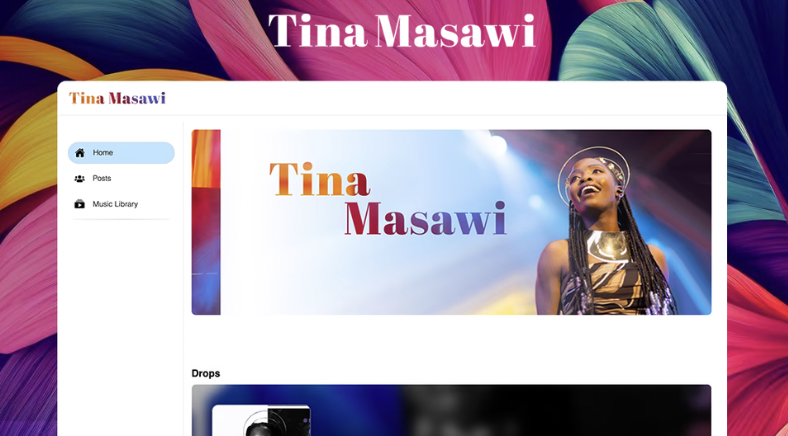

Used by Leading and Emerging Artists

View All Websites

Use it as the Link in Bio on all your socials to showcase your own brand and content.


Escape the Algorithm: sell music, merch, NFTs, or tickets directly to fans and make a fair living!

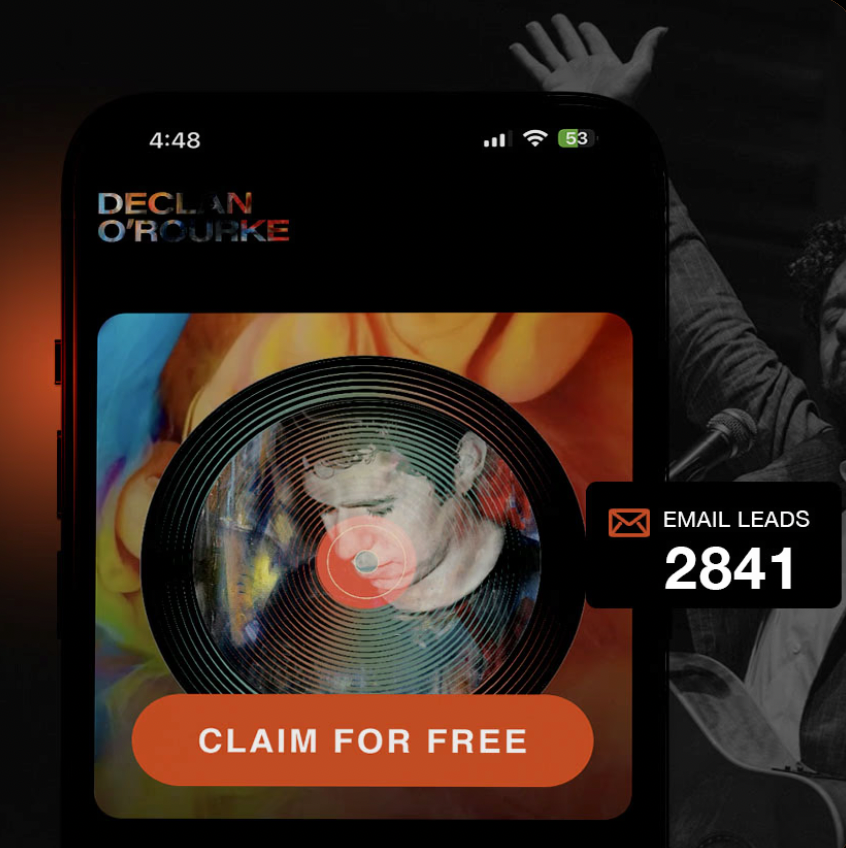

Get Valuable Fan Data to boost your marketing and grow your audience.


What we replace

All tools combined: $297.00 per month

Website Builder

Replaces: WordPress, Squarespace

Link in Bio

Replaces: Linktree, KOMI

Email Marketing & Direct Messaging

Replaces: Mailchimp, Discord, Laylo

e-Commerce

Replaces: Shopify, Bandcamp

Membership Subscriptions

Replaces: Patreon

Music Library

Replaces: SoundCloud

Digital Collectibles & Web3

Replaces: OpenSea

What Our Artists Are Saying

"I didn't have my branding established, but the Loop Fans team helped me put something together that really represents me. So happy with my website!"

Samantha Ann

samanthaann.loop.fans

"My Loop Fans website is my official Link in Bio and digital store. All my main socials and content are easy to find, and it's fully branded with my own logo and colors."

Devitchi

devitchi.loop.fans

"I replaced my previous site to use Loop Fans. I can manage it myself and easily create new music drops and let my fans know where I'm playing next."

Declan O'Rourke

declanorourke.com

Create your free website now!

Get Started

Most Asked Questions